AP Syllabus focus: ‘Calorimetry experiments measure heat transfer by tracking temperature changes and applying the heat transfer equation.’

Calorimetry is the primary laboratory method for connecting measurable temperature changes to heat transfer. Careful experimental technique, thoughtful assumptions, and correct data handling determine whether calorimetry results are meaningful and reproducible.

What calorimetry measures

Calorimetry links a physical measurement (temperature change) to heat transfer during a process such as a reaction, dissolution, or mixing. The key idea is that a known (or well-characterized) material in contact with the process changes temperature, and that temperature change is used to determine the heat transferred.

Calorimetry: An experimental method for determining heat transfer by measuring temperature changes in a calorimeter and applying an appropriate heat-transfer relationship.

In typical AP Chemistry lab contexts, the “known material” is often water (because its thermal properties are well tabulated) and the “process” occurs in a container designed to reduce heat exchange with the external environment.

Calorimeter: A device (often insulated) used to contain a process and its surroundings so that heat transfer can be inferred from measured temperature changes.

Core relationship used in calorimetry

Calorimetry experiments operationalize the syllabus statement by (1) measuring initial and final temperatures and (2) applying the heat transfer equation to the material whose temperature change is measured.

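$q = mc\Delta T$

$q$ = heat transferred (J)

$m$ = mass of the substance changing temperature (g)

$c$ = specific heat capacity of that substance (J/(g·°C))

$\Delta T = T_\text{final} - T_\text{initial}$ (°C or K)

If the calorimeter hardware absorbs a significant amount of heat, its contribution is

$q_\text{cal} = C_\text{cal}\Delta T$

$q_\text{cal}$ = heat absorbed by the calorimeter hardware (J)

$C_\text{cal}$ = calorimeter heat capacity (J/°C)

A positive $q$ means the measured material warmed; a negative $q$ means it cooled. The sign of $q$ follows directly from the sign of $\Delta T$ for the measured material.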
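The two relationships can be written as two small functions. This is a minimal sketch, and Python is used only for illustration. The relationships are the heat transfer equation $q = mc\Delta T$ and the hardware term $q_\text{cal} = C_\text{cal}\Delta T$. The function names and sample values are illustrative assumptions, not values from the text: 50.0 g of water, $c = 4.18$ J/(g·°C), and a hypothetical $C_\text{cal}$ of 10.0 J/°C. The sign of $q$ comes straight from $\Delta T = T_\text{final} - T_\text{initial}$.

```python
def heat_transferred(mass_g: float, specific_heat: float,
                     t_initial: float, t_final: float) -> float:
    """q = m * c * dT for the material whose temperature is measured.

    specific_heat is in J/(g*degC); temperatures in degC (a change of
    1 degC equals a change of 1 K). Positive q: the material warmed.
    """
    delta_t = t_final - t_initial  # keep the sign of the change
    return mass_g * specific_heat * delta_t


def calorimeter_heat(c_cal: float, t_initial: float, t_final: float) -> float:
    """q_cal = C_cal * dT for the calorimeter hardware (C_cal in J/degC)."""
    return c_cal * (t_final - t_initial)


# Hypothetical values: 50.0 g of water, c = 4.18 J/(g*degC), C_cal = 10.0 J/degC
warming = heat_transferred(50.0, 4.18, 22.0, 25.0)  # water warmed: q > 0
cooling = heat_transferred(50.0, 4.18, 25.0, 22.0)  # water cooled: q < 0
hardware = calorimeter_heat(10.0, 22.0, 25.0)
print(f"{warming:.1f} J, {cooling:.1f} J, {hardware:.1f} J")
```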

Common calorimeter setups used in the lab

Coffee-cup (constant-pressure) calorimetry

A coffee-cup calorimeter (nested foam cups with a lid) is used for processes in solution.

The quantity directly measured is the temperature change of the solution; the cup, stirrer, and thermometer are sometimes included in the heat accounting as well. Key features:

Open to the atmosphere (approximately constant pressure)

Temperature change is measured with a thermometer or digital probe

Works best when the solution is well mixed and heat loss is minimized
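When two solutions are mixed in a coffee-cup calorimeter, a common simplification is to treat the dilute mixture as water. The sketch below rests on three assumptions that are not stated in the text:

- a density of 1.00 g/mL for the mixture;
- $c = 4.18$ J/(g·°C) for the mixture;
- when the two solutions start at slightly different temperatures, the starting temperature is their mass-weighted average.

The function name and numbers are hypothetical.

```python
# Assumptions (not from the text): the dilute solutions behave like water.
DENSITY_G_PER_ML = 1.00
SPECIFIC_HEAT_J_PER_G_C = 4.18


def coffee_cup_q_solution(vol_a_ml: float, vol_b_ml: float,
                          t_a: float, t_b: float, t_extreme: float) -> float:
    """Heat absorbed by the mixed solution, q = m c dT (J).

    t_extreme is the maximum (or minimum) temperature after mixing.
    """
    mass_a = vol_a_ml * DENSITY_G_PER_ML
    mass_b = vol_b_ml * DENSITY_G_PER_ML
    total_mass = mass_a + mass_b
    # Assumed starting temperature: mass-weighted average of the two solutions
    t_initial = (mass_a * t_a + mass_b * t_b) / total_mass
    delta_t = t_extreme - t_initial
    return total_mass * SPECIFIC_HEAT_J_PER_G_C * delta_t


# Hypothetical run: 50.0 mL at 21.0 degC mixed with 50.0 mL at 21.4 degC, peak 27.9 degC
q_solution = coffee_cup_q_solution(50.0, 50.0, 21.0, 21.4, 27.9)
print(f"q_solution = {q_solution:.0f} J")
```

A positive result means the solution absorbed heat, so the mixing process itself released heat.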

Bomb (constant-volume) calorimetry

A bomb calorimeter is used for vigorous reactions (commonly combustion).

A bomb calorimeter setup places a sealed steel combustion bomb inside a water bucket/jacket so the reaction heat flows into a known thermal mass. The measured temperature rise of the surrounding water (and the calorimeter hardware) is then used to determine heat released at constant volume, typically via calibration of the calorimeter’s effective heat capacity.

Key features:

Reaction occurs in a sealed steel vessel immersed in a known mass of water

Temperature rise of the surrounding water bath is measured

Requires knowledge of the calorimeter’s overall heat capacity (often found by calibration)
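For a bomb calorimeter, the water and hardware are often lumped into one calibrated heat capacity $C_\text{cal}$. This is a minimal sketch under two assumptions: a calibration run uses a sample whose heat release is known, and no heat leaves the calorimeter. Calibration then gives $C_\text{cal} = q_\text{known}/\Delta T$. The reaction's heat at constant volume is $q_\text{rxn} = -C_\text{cal}\Delta T$. The functions and example values below are hypothetical.

```python
def calibrate_c_cal(known_heat_released_j: float, delta_t_c: float) -> float:
    """C_cal (J/degC) from a calibration run: all released heat is assumed
    to be absorbed by the water plus hardware, q = C_cal * dT."""
    return known_heat_released_j / delta_t_c


def bomb_q_reaction(c_cal_j_per_c: float, t_initial: float, t_final: float) -> float:
    """Heat of the reaction at constant volume (J).

    The calorimeter absorbs +C_cal * dT, so the reaction's q is the negative.
    """
    return -c_cal_j_per_c * (t_final - t_initial)


# Hypothetical calibration: sample releases 26400 J and warms the bath by 2.64 degC
c_cal = calibrate_c_cal(26_400.0, 2.64)
# Hypothetical sample run: bath warms from 24.10 to 26.35 degC
q_rxn = bomb_q_reaction(c_cal, 24.10, 26.35)
print(f"C_cal = {c_cal:.0f} J/degC, q_rxn = {q_rxn:.0f} J")
```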

How calorimetry is carried out (high-quality technique)

Planning and setup

Choose a calorimeter appropriate to the process (solution vs. combustion).

Measure masses/volumes with appropriate precision; record units consistently.

Select a temperature probe with suitable resolution; confirm it equilibrates quickly.

Assemble insulation, lid, and a stirring method to reduce temperature gradients.

Running the measurement

Record initial temperature once it is stable.

Initiate the process (mix reactants, dissolve solute, ignite sample, etc.).

Stir consistently to keep the temperature uniform throughout the solution/bath.

Record the peak (or minimum) temperature reached, or collect a temperature–time trace if available.

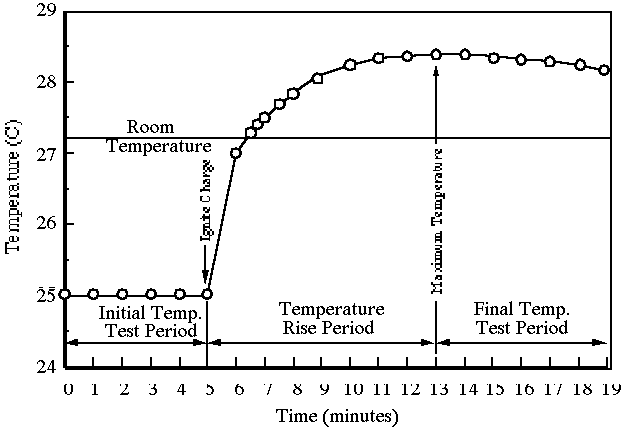

This figure shows a typical calorimetry temperature–time profile: an initial baseline, a rapid temperature change when the process begins, and a slower approach toward a maximum (or minimum) as heat exchange and probe response lag occur. Reading from a trace like this helps reduce error compared with relying on a single final reading, especially when temperature lag or heat loss is significant.
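When a temperature–time trace is available, $\Delta T$ can be read as the extreme temperature minus the pre-start baseline. The sketch below is one simple way to do this and assumes the caller knows when the process started. It averages the readings before that time as the baseline. It then picks whichever extreme (maximum or minimum) lies farther from the baseline, so it handles both warming and cooling runs. It does not correct for heat loss. The function name and trace values are invented for illustration.

```python
from statistics import mean


def delta_t_from_trace(times_s, temps_c, t_start_s):
    """Delta T = extreme temperature after t_start minus the pre-start baseline.

    Baseline: mean of readings taken before t_start (at least one is required).
    Extreme: the maximum or minimum after t_start, whichever lies farther
    from the baseline (works for warming and cooling runs). No heat-loss correction.
    """
    baseline = mean(T for t, T in zip(times_s, temps_c) if t < t_start_s)
    after = [T for t, T in zip(times_s, temps_c) if t >= t_start_s]
    highest, lowest = max(after), min(after)
    if abs(highest - baseline) >= abs(lowest - baseline):
        extreme = highest
    else:
        extreme = lowest
    return extreme - baseline


# Invented trace: readings every 10 s, process started at t = 30 s
times = [0, 10, 20, 30, 40, 50, 60, 70]
temps = [21.0, 21.0, 21.0, 24.5, 26.0, 26.3, 26.2, 26.0]
print(f"Delta T = {delta_t_from_trace(times, temps, 30):.2f} degC")
```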

Data handling essentials

Compute $\Delta T = T_\text{final} - T_\text{initial}$, keeping the sign.

Use $q = mc\Delta T$ for the measured material (often the solution or water bath).

If the calorimeter hardware absorbs a significant amount of heat, incorporate $q_\text{cal} = C_\text{cal}\Delta T$.

Keep track of what each $q$ refers to (solution, water bath, calorimeter hardware). Clear labeling prevents sign and interpretation errors.
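The data-handling steps above can be made explicit by labeling the heat each component absorbs. This minimal sketch assumes a perfectly insulated calorimeter. Under that assumption, the heat of the process is the negative of everything the measured components absorbed: $q_\text{process} = -(q_\text{solution} + q_\text{cal})$. The dictionary labels and numbers are hypothetical.

```python
def heat_of_process(absorbed_j: dict[str, float]) -> float:
    """Heat of the process (J), assuming no heat exchange with the room.

    absorbed_j maps a label (e.g. "solution", "calorimeter hardware")
    to the heat that component absorbed, sign kept.
    """
    return -sum(absorbed_j.values())


delta_t = 5.0  # hypothetical temperature rise, degC
absorbed = {
    "solution": 100.0 * 4.18 * delta_t,      # q = m c dT, solution treated as water
    "calorimeter hardware": 15.0 * delta_t,  # q_cal = C_cal dT, hypothetical C_cal
}
q_process = heat_of_process(absorbed)
print(f"q_process = {q_process:.0f} J")  # negative here: the process released heat
```

Keeping each contribution under its own label makes it easy to check which terms were included and what sign each one carries.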

Assumptions and limitations that matter

Calorimetry depends on practical approximations. Results are strongest when these are at least approximately true:

Minimal heat exchange with the external environment (good insulation, lid, short run time)

Uniform temperature in the measured material (effective stirring)

Known heat capacity values for the material(s) whose temperature is tracked (or a calibrated $C_\text{cal}$)

No unaccounted side processes (evaporation, splashing loss, incomplete reaction, gas escaping with significant heat)

Major sources of error and how labs reduce them

Heat loss/gain to the room: use a lid, increase insulation, avoid long delays, and keep hands off the cup.

Temperature lag and overshoot: stir steadily; use a probe with fast response; record temperature continuously when possible.

Inaccurate mass/volume: use calibrated balances and volumetric glassware when needed.

Nonuniform mixing: add reagents consistently and stir to eliminate hot/cold pockets.

Neglecting calorimeter heat capacity: for higher-precision work, calibrate the calorimeter or include $q_\text{cal} = C_\text{cal}\Delta T$ in calculations.

FAQ

How is the calorimeter heat capacity $C_\text{cal}$ determined?

A common approach is calibration by mixing hot and cold water of known masses and initial temperatures.

You assume the net heat change of the water plus the calorimeter is approximately zero, then solve for $C_\text{cal}$ from the observed final temperature.
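The hot/cold water calibration can be sketched as a heat balance solved for $C_\text{cal}$. The sketch rests on three assumptions:

- the cold water is placed in the calorimeter first, so the hardware starts at the cold-water temperature;
- no heat is exchanged with the room;
- $c = 4.18$ J/(g·°C).

The balance is then $m_\text{hot}c(T_f - T_\text{hot}) + m_\text{cold}c(T_f - T_\text{cold}) + C_\text{cal}(T_f - T_\text{cold}) = 0$. Names and numbers are hypothetical.

```python
def calibrate_with_water(m_hot_g: float, t_hot: float,
                         m_cold_g: float, t_cold: float,
                         t_final: float, c_water: float = 4.18) -> float:
    """Solve the heat balance for C_cal (J/degC).

    Assumes the cold water starts in the calorimeter (hardware starts at t_cold)
    and no heat is exchanged with the room, so
    m_hot*c*(t_final - t_hot) + m_cold*c*(t_final - t_cold) + C_cal*(t_final - t_cold) = 0
    """
    q_hot = m_hot_g * c_water * (t_final - t_hot)     # negative: hot water cools
    q_cold = m_cold_g * c_water * (t_final - t_cold)  # positive: cold water warms
    return -(q_hot + q_cold) / (t_final - t_cold)


# Hypothetical run: 50.0 g at 60.0 degC poured into 50.0 g at 20.0 degC, final 39.0 degC
print(f"C_cal = {calibrate_with_water(50.0, 60.0, 50.0, 20.0, 39.0):.1f} J/degC")
```

With these invented numbers, equal masses of water alone would settle at 40.0 °C. The lower observed final temperature is what reveals the heat absorbed by the hardware, here about 22 J/°C.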

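Why is water the usual working material in calorimetry?

Water has an accurately known, relatively large specific heat capacity. It can therefore take up the heat of a process with a moderate temperature change that stays close to room temperature, which limits heat exchange with the surroundings, and the heat calculated from $q = mc\Delta T$ is reliable.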

It is also inexpensive, chemically compatible with many ionic solutions, and its thermal properties are widely tabulated.

How can a continuous temperature record correct for heat loss?

If you record temperature continuously, you can extrapolate the cooling (or warming) trend back to the mixing time.

This helps estimate the “true” peak (or minimum) temperature that would have occurred with perfect insulation.
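One way to do the extrapolation is to fit an ordinary least-squares line through the slow, roughly linear segment after the extreme and evaluate it at the mixing time. This sketch assumes the caller has already chosen which readings lie on that linear segment. The function name and trace values are hypothetical.

```python
def extrapolate_to_mixing(times_s, temps_c, t_mix_s):
    """Least-squares line T = intercept + slope * t through the chosen
    post-extreme readings, evaluated at the mixing time t_mix_s."""
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_temp = sum(temps_c) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    sxy = sum((t - mean_t) * (T - mean_temp) for t, T in zip(times_s, temps_c))
    slope = sxy / sxx                       # degC per second
    intercept = mean_temp - slope * mean_t  # fitted temperature at t = 0
    return intercept + slope * t_mix_s


# Hypothetical cooling segment after the peak; mixing happened at t = 30 s
t_corrected = extrapolate_to_mixing([60, 90, 120, 150], [26.40, 26.25, 26.10, 25.95], 30)
print(f"Extrapolated extreme: {t_corrected:.2f} degC")
```

The extrapolated value sits slightly above the highest recorded reading because the fit undoes the heat lost between mixing and the first readings on the cooling line.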

What makes a temperature probe suitable for calorimetry?

Key features include:

Fast response time (reduces lag)

Fine resolution (e.g., $0.01\,^{\circ}\mathrm{C}$ rather than $0.1\,^{\circ}\mathrm{C}$)

Good calibration and stability over the temperature range used
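A rough worst-case estimate shows why resolution matters. Each reading is treated as uncertain by half the display resolution. $\Delta T$ is a difference of two readings, so it is uncertain by up to one full resolution step. This is a simplifying convention, not a full uncertainty analysis. The function name and the 2.0 °C temperature change are hypothetical.

```python
def relative_uncertainty_delta_t(resolution_c: float, delta_t_c: float) -> float:
    """Worst-case relative uncertainty in Delta T from probe resolution alone.

    Each reading: +/- resolution/2, so the difference of two readings is
    uncertain by up to +/- resolution.
    """
    return resolution_c / abs(delta_t_c)


for resolution in (0.1, 0.01):
    share = relative_uncertainty_delta_t(resolution, 2.0)  # hypothetical 2.0 degC change
    print(f"{resolution} degC probe: about {share:.1%} of Delta T")
```

With a 2.0 °C change, a 0.1 °C probe gives roughly 5% relative uncertainty in $\Delta T$, while a 0.01 °C probe gives roughly 0.5%.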

Why does stirring matter?

Without stirring, the temperature near the point where mixing occurs can differ from the temperature at the probe.

Consistent stirring reduces temperature gradients, making the recorded temperature closer to the true average needed for reliable use of $q = mc\Delta T$.

Practice Questions

(2 marks) In a coffee-cup calorimeter, of solution warms from to . Assuming , calculate $q$ for the solution.

1 mark: Calculates $\Delta T$.

1 mark: Uses $q = mc\Delta T$ correctly to obtain $q$ (accept 941 J) with a positive sign.

(6 marks) Describe how you would carry out a calorimetry experiment to determine the heat released or absorbed when two aqueous solutions are mixed in a coffee-cup calorimeter. Include how you would minimise errors and how the temperature data are used with $q = mc\Delta T$.

1 mark: Mentions insulated cup(s) with lid (coffee-cup calorimeter) and thermometer/probe.

1 mark: Measures and records masses/volumes of solutions and initial temperature(s).

1 mark: Mixes solutions in the calorimeter and stirs to ensure uniform temperature.

1 mark: Records maximum/minimum temperature (or temperature–time data) to obtain $\Delta T$.

1 mark: Uses $q = mc\Delta T$ with appropriate $m$ and $c$ (often approximated as those of water) to calculate the heat change of the solution.

1 mark: Error reduction stated (any one: lid/insulation to reduce heat exchange, prompt mixing and measurement, consistent stirring, probe resolution/fast response, avoiding splashing/evaporation).